Explore Our Latest Newsletter – April 2026 Edition

In this edition of our Quarterly Newsletter, we share key updates and perspectives on enterprise AI, SAP Business Data Cloud, BW modernization, SAP + Databricks, planning transformation, and modern data architecture.

You’ll also find highlights from SAP Sapphire Orlando 2026, upcoming event updates, and practical resources to help organizations build trusted, AI-ready data foundations.

A Key to AI Success: The Data Foundation

AI success doesn’t start with algorithms. It starts with data.

In today’s competitive landscape, organizations are investing heavily in AI, yet many struggle to see real returns. Why? Because their data foundation isn’t ready. Clean, integrated, and accessible data is the backbone of every successful AI initiative.

This guide explores why building the right data foundation is essential for unlocking AI’s full potential. From overcoming silos to streamlining data pipelines, we’ll uncover the practical steps organizations need to take to turn data into actionable intelligence and drive meaningful outcomes with AI.

Let’s dive into how a strong data foundation becomes the true catalyst for AI success.

Enabling Business Friendly Semantics in Power BI from SAP Datasphere.

As a large commercial construction company, with a long history in North America, Barton Malow is committed to building People, Projects, and Communities. While Barton Malow has been in business for over 100 years, they are not resting comfortably with past processes. Rather, Barton Malow is on a mission to transform the construction industry through innovation and increased efficiency in the building process.

To that end, they are focused on leveraging data and having a data-driven culture to aid them in their mission. However, on the journey they encountered a bit of a road bump with SAP Datasphere and Power BI.

THE PROBLEM

To get started, here is a bit of background on the situation. The Barton Malow team faced a significant challenge: business metadata from SAP Datasphere could not be fed into Power BI automatically. In addition, Datasphere checks only technical names for duplication, not business names, and whenever duplicate business names appeared in the same source, the Power BI load failed.

This limitation forced the team to gather and load data into Power BI manually every day. The workaround consisted of manually pulling the metadata into a JSON file and then running it through a Power BI rename step. This not only increased the risk of errors but also consumed valuable time and resources, hindering the company’s ability to use data-driven insights effectively and efficiently.
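To make the duplicate-name problem concrete, here is a minimal Python sketch of the kind of check a rename step needs. It is an illustration only, not the process Barton Malow used: the metadata layout (`technical_name` and `business_name` keys), the function names, and the sample columns are all assumptions.

```python
from collections import defaultdict


def find_duplicate_business_names(columns):
    """Return {business_name: [technical_names]} for names used more than once.

    `columns` is a list of dicts with "technical_name" and "business_name"
    keys. This layout is hypothetical, not a Datasphere export format.
    """
    by_business = defaultdict(list)
    for col in columns:
        by_business[col["business_name"]].append(col["technical_name"])
    return {name: techs for name, techs in by_business.items() if len(techs) > 1}


def build_rename_map(columns):
    """Map each technical name to a display name for a rename step.

    Business names that collide get the technical name appended. This
    assumes no existing business name already has that suffixed form.
    """
    duplicates = find_duplicate_business_names(columns)
    rename_map = {}
    for col in columns:
        tech, business = col["technical_name"], col["business_name"]
        if business in duplicates:
            rename_map[tech] = f"{business} ({tech})"
        else:
            rename_map[tech] = business
    return rename_map


# Hypothetical sample metadata with one duplicated business name.
columns = [
    {"technical_name": "PROJ_ID", "business_name": "Project"},
    {"technical_name": "PROJ_TXT", "business_name": "Project"},
    {"technical_name": "COST_AMT", "business_name": "Cost"},
]
rename_map = build_rename_map(columns)
```

Running a check like this before the load surfaces the collisions up front, rather than letting the load fail on them.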

THE SOLUTION

To overcome these problems, the Tek team worked with Barton Malow to thoroughly understand the current situation and design an automated solution. The solution used SAP HANA Cloud directly: calculation views were modeled in an HDI container using SAP Business Application Studio and then accessed by Power BI. This approach enabled the retrieval of business names without manual intervention.

THE RESULTS

At the end of the day, the outcome was a solution that provided:

- Improved business process efficiency with the elimination of manual processes.

- Seamless availability of business names in Power BI to support self-service and data-driven culture.

Ready to move your data strategy forward and unlock the full potential of your data? This client success story is just one example of how we help businesses modernize their data architectures, streamline operations, and drive faster decision-making.

Let us help you achieve the same success! Contact us today to discuss how we can support your migration to SAP Datasphere. Contact TEK – Tek Digital Transformations

Streamlining Automotive Incentives Planning

By – Merri Beckfield

Most car buyers likely aren’t aware of the work that goes into planning the incentives we often see advertised: those special offers (such as cash back or an amazing 0% interest rate) encouraging each of us to purchase a new vehicle. The effort that car manufacturers put into this is significant. The number of variables that must be considered to arrive at an incentive offering that is competitive, cost effective, and drives sales is daunting. Beyond the demand forecast, costs, and the manufacturer’s margin, factors such as the impact on the dealer’s margin and market conditions that vary by state add further complexity.

In collaboration with a large-scale automotive manufacturer, we tackled the challenge of streamlining the automotive incentive planning process. By implementing an end-to-end solution that leveraged predictive techniques, automation, and strong change management, the team streamlined the process, reducing its duration from weeks to days and delivering an 80% reduction in manual interventions.

“Our planners love the new experience, and we as a leadership team are thrilled with the improved speed to market.” – Director of FP&A

THE PROBLEM

Before diving in, it’s important to understand the challenges the manufacturer faced and why change was needed. The supply chain was in a state of turmoil, and rising banking interest rates were significantly impacting auto incentives. Financial closing was a cumbersome and complicated process due to the intricacies of dealer contract terms and dealer stock. The organization was finding it nearly impossible to react to market conditions and adapt new promotions to the market without heavy manual labor. Incentive planning was a time-consuming, manual task that lacked the flexibility needed to keep up with the fast-paced market.

THE VISION

To improve the situation, the auto manufacturer clearly defined its vision and objectives. First, they wanted to tackle the entire incentives lifecycle, ensuring that the evolving incentives management systems could deliver meaningful business value across all system elements. A major focus was enabling granular geographic planning for more precise program design and targeted deployment, specifically the ability to plan targeted incentive offers down to the region or state level. Second, they wanted to streamline operations via automated program execution integrated with financial systems, which would lead to faster, more reliable incentive rollouts. This needed to account for dealer stock in the closing process. Finally, they sought to enhance post-mortem program performance analysis, enabling their brands to balance volume and profit margins effectively while achieving both brand and corporate sales objectives.

THE SOLUTION

To overcome the challenges, together the team came up with a strategy and plan to develop an end-to-end incentives life cycle planning application which considered all the key variables. The solution not only leveraged predictive analytics to create a granular plan by geography to the region/state level, but it also leveraged workflows, along with automation to streamline the planning process.

One key to success of the solution was leveraging SAP Analytics Cloud as the single enterprise planning tool. By providing end-to-end capabilities in a “one stop shop”, manual interventions were minimized. Planning duration was reduced from weeks to hours.

From a technical point of view, SAP Analytics Cloud powered the planning functions, and data harmonization was done in BW/4HANA. To achieve VIN-level planning, write-back functionality to BW/4HANA was leveraged.

From a business point of view, the following capabilities of the solution were transformative:

- Including dealer stock considerations in the financial close process.

- Having flexibility in managing dealer margins.

- Being able to plan and create targeted offers down to the state level.

THE RESULTS

With a remarkable 80% reduction in manual interventions, planners can now focus on more strategic initiatives. The application has enabled effective budget allocations and provided real-time spend visibility, which means users can make informed decisions more rapidly.

Users are enjoying an improved experience thanks to the seamless integration of sales, incentive, and financial planning, along with automated financial adjustments that streamlined processes. Collaborating with stakeholders to design effective workflows has paid off, as users now can analyze and plan incentive budgets much more efficiently.

Plus, reconciling incentives and optimizing programs has never been easier! The flexibility to add new planning models on the fly, together with personalized reporting options complete with filters for models and even VINs, empowers users to align plans across programs and measure their effectiveness.

Overall, this application has not only enhanced operational efficiency but also fostered a culture of collaboration and data-driven decision-making.

“The partnership between our organization, TEK, and SAP was incredible. We were able to leverage SAP Analytics Cloud to modernize and automate a process that was broken and expensive. … TEK brought in the best-in-class experts with deep automotive industry experience and thought leadership.” – Director Finance and Planning

If you are facing a similar planning challenge (regardless of industry), let us help you achieve the same success! Contact us today to discuss.

Contact TEK – Tek Digital Transformations

Got Data, but No AI? Why Your Data Architecture Might Be the Problem

Got Data, but No AI?

Are you facing this too?

It’s more common than you think.

A company kicks off an AI project.

The budget’s approved. The latest tools are in place. A smart, capable data team is ready to roll.

Everyone’s excited—AI will predict problems, streamline decisions, and cut costs.

But a few months in… things start to stall.

The ideas looked great on paper—but in practice? Nothing really works.

So, what went wrong?

It’s not the people.

It’s not the tools.

It’s not the money.

The real roadblock? The data.

It’s messy. Disconnected. Spread across silos.

And that makes it nearly impossible for AI to deliver real results.

Having Data Isn’t the Same as Using Data

These days, most companies have plenty of data. Sales records, customer details, machine logs, website clicks: the list goes on. But here’s the problem: that data often lives in different systems. The sales team can’t easily see what the operations team has. The finance data is stored separately. Different formats, different rules, no easy way to connect it all.

So, when you try to use AI, it struggles. It either can’t find what it needs, or it ends up working with bad, confusing data. And that means the insights it produces don’t help the business move forward.

Why Your Data Structure Matters So Much

Think of your data like building blocks. If they don’t fit together properly, you can’t build anything strong, no matter how advanced your AI tools are.

Here’s a simple comparison that shows what a weak setup looks like versus a strong one that’s ready for AI:

| Weak Data Setup | Strong Data Setup |
| --- | --- |
| Data stuck in silos, hard to combine | Data connected across teams and systems |
| Outdated or messy data | Clean, consistent, high-quality data |
| Slow, manual preparation | Automated data flows ready for AI |
| Hard to get current info | Real-time data availability |
| Risk of compliance issues | Strong governance and secure practices |

When your data is structured well, AI can deliver the insights, predictions, and automation you want.

The Hidden Costs of a Weak Data Setup

When companies don’t focus on fixing their data structure, they end up paying for it in other ways. Teams spend too much time just cleaning and organizing data instead of building AI models. The insights that come out of AI often aren’t useful because the data is messy. And delays pile up — months go by before the business sees any value from its AI efforts. All of this creates frustration across teams and wastes valuable time, energy, and budget.

What You Can Do

The good news is this can be fixed. The first step is to stop thinking of data as just an IT issue. Data is a core part of the business plan; it powers the decisions you want AI to help you make. That means planning your data setup with your AI goals in mind. Companies that succeed here often rethink how their data is connected, explore modern ways to manage and govern it, and make sure the data is accurate, safe, and up to date.

When the foundation is solid, AI can finally do what it’s supposed to: help your business grow smarter, faster, and stronger.

The Big Takeaway

Having data is just the start.

If AI isn’t delivering the value you hoped for, take a closer look at how your data is structured. Fix that, and you’ll unlock the real power of AI.

Data Silos and How They’re Holding Back AI

Everyone’s Investing in AI. But Few Are Ready for It.

I’ve been doing some research lately—talking to teams, reading up on AI use cases, and honestly just observing what’s working (and what’s not) in real-world scenarios.

There’s a pattern I keep seeing.

Everyone’s excited about AI. Companies are investing in platforms, training models, and talking a lot about what’s possible. But when it comes time to use AI to solve real problems?

They hit a wall.

And often, that wall is data silos.

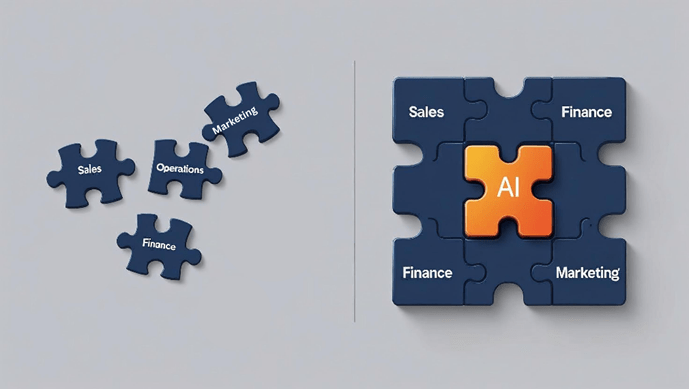

What Exactly Are Data Silos?

Data silos happen when information is scattered across tools, teams, or platforms—and no one’s sharing. It might not seem like a big deal at first. After all, each team knows their data, right?

But when your sales, finance, marketing, and ops data are living in separate worlds, disconnected and unaligned, your AI doesn’t stand a chance.

Even if your organization has all the right inputs, it’s like trying to build a puzzle with pieces from different sets.

Why Silos Kill AI Potential

AI isn’t magic. It needs clean, consistent, and connected data to work well.

When your data is fragmented:

- AI models start guessing based on partial info.

- Insights get buried in disconnected systems.

- Decision-making becomes slower and less confident.

Silos make it nearly impossible for AI to see the full picture. And when that happens, trust in AI outcomes erodes quickly across the organization.

The Fix? You Don’t Need to Start Over

Here’s the good news: solving this doesn’t mean ripping out your current systems.

The smarter approach is to build a unified data layer—a way to connect your existing sources without forcing everything into one giant warehouse. Tools like SAP Business Data Cloud do exactly this. They let your data stay where it is, but make it visible, governable, and usable across functions.

This kind of setup means your AI can finally pull from the full context—not just fragments.

Get Clear on Ownership and Definitions

Tech alone doesn’t fix the problem. You need clarity on who owns what data, how it’s maintained, and how it’s defined.

Something as simple as the word “customer” can mean five different things across teams—and that kind of inconsistency can throw off everything from sales forecasting to churn prediction.

Building shared definitions and clear ownership ensures your AI models are working with reality, not assumptions.
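Here’s a tiny Python sketch of how that plays out. Everything in it is made up for illustration: the records, the field names, and the two team definitions are assumptions, not anyone’s real data model.

```python
from datetime import date

# Hypothetical account records; field names are assumptions for illustration.
accounts = [
    {"id": 1, "signed_contract": True, "last_order": date(2025, 11, 3)},
    {"id": 2, "signed_contract": True, "last_order": None},
    {"id": 3, "signed_contract": False, "last_order": date(2026, 1, 15)},
    {"id": 4, "signed_contract": False, "last_order": None},
]


def sales_customers(rows):
    """Assumed sales definition: anyone with a signed contract."""
    return {r["id"] for r in rows if r["signed_contract"]}


def finance_customers(rows):
    """Assumed finance definition: anyone who has placed an order."""
    return {r["id"] for r in rows if r["last_order"] is not None}


sales = sales_customers(accounts)
finance = finance_customers(accounts)

# Accounts that only one team counts as a customer.
disputed = sales ^ finance
```

Both teams report two customers, yet they agree on only one account. A model trained on either view alone inherits that team’s assumption, which is why the shared definition has to come first.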

Bring AI Into the Business, Not Just the Lab

AI can’t sit on the sidelines. To create impact, it needs to be embedded directly into your workflows—supporting things like planning, demand forecasting, pricing, or customer retention.

This shift from AI as an experiment to AI as a core part of business operations is where real ROI begins to show up.

You Don’t Need More Data. You Need Better Data.

Most companies already have plenty of data. But it’s not about volume—it’s about how that data connects and flows.

That’s the real unlock for AI.

When your data is silo-free, your AI can move faster, learn faster, and deliver smarter insights that drive results.

Let’s Build a Smarter Foundation

At Tek Analytics, we help businesses go from siloed and stuck to smart and scalable—using data platforms that are built for AI success.

If your team is exploring how to make your data AI-ready, let’s have a conversation.

Because the best AI strategy? Starts with the right data foundation.

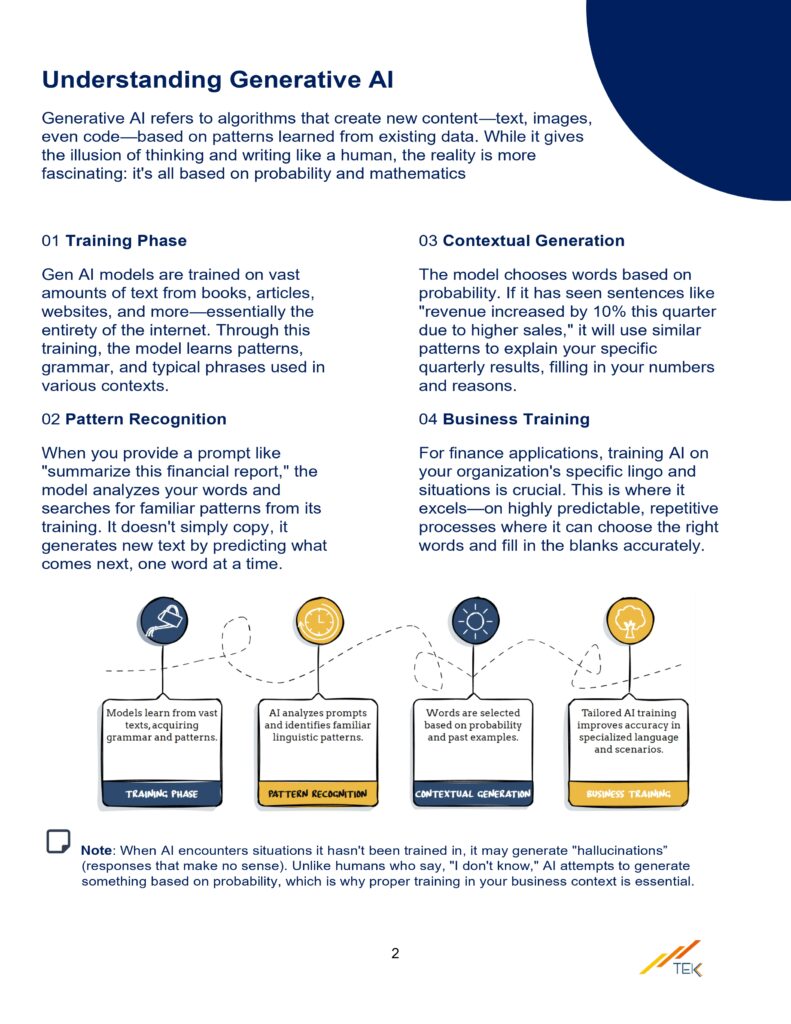

What Is Gen AI and Why Care

By – Sai Srinija Potnuru

What Is Generative AI and Why Should Organizations Care?

Let’s face it—we’re living in a world where AI isn’t just a sci-fi buzzword anymore. It’s real, it’s here, and it’s already changing how businesses operate—fast.

And at the heart of this shift? Generative AI (or GenAI, as we like to call it).

But what *is* it really? And more importantly—why should your organization care?

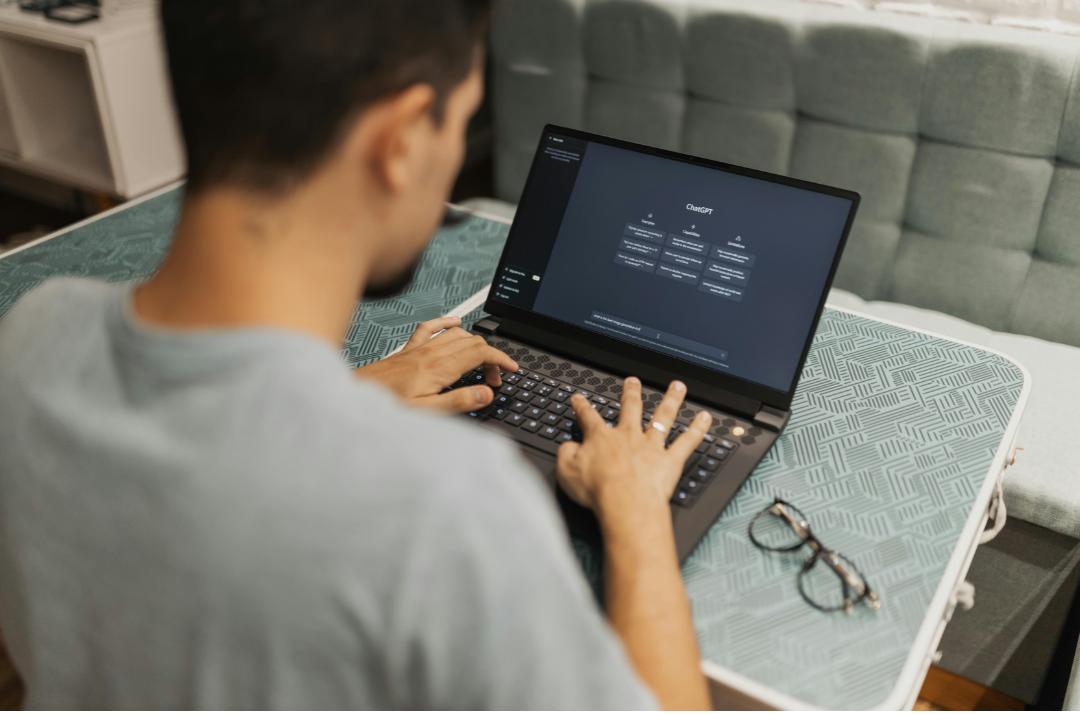

So… What Is GenAI?

GenAI is a type of artificial intelligence that doesn’t just analyze data—it *creates*. We’re talking text, images, code, video, audio, even 3D models. It’s the engine behind tools like ChatGPT, DALL·E, GitHub Copilot, and more.

Instead of just answering questions, GenAI collaborates. It learns from massive datasets and responds in human-like ways—drafting emails, summarizing reports, writing code, and generating ideas that honestly feel… creative.

In short? GenAI is a productivity booster that helps people get more done—faster, smarter, and with more impact.

Why Should Your Org Care?

Because GenAI isn’t just another tech trend. It’s a fundamental business shift. And if your competitors aren’t already testing it out… they will be soon.

Here’s what GenAI is bringing to the table:

1. Things Get Done, Way Faster: GenAI cuts hours off repetitive tasks. Instead of manually formatting that 20-page report, let AI do the heavy lifting so your team can focus on the good stuff, like strategy and innovation.

2. Creativity Gets a Major Upgrade: Need a dozen ad copy variations? Personalized emails for different customer segments? A fresh product description that actually stands out? Done, done, and done. GenAI acts like a creative partner, not a replacement, and a powerful one.

3. Personalization at Scale: This is where GenAI really shines. It helps you deliver customized experiences to thousands of people without losing the human touch. Smarter bots, adaptive learning, AI-curated recommendations… even at enterprise scale, your users will feel like you’re speaking directly to them.

Real-World Use Cases (That Aren’t Just Hype)

Still feels a bit abstract? Let’s talk about what this looks like in the real world:

- Retail: Personalized promotions, 24/7 human-sounding support, auto-generated product descriptions

- Finance: AI-driven risk summaries, plain-language policy explanations, market trend recaps

- Manufacturing: Auto-generated manuals, predictive maintenance alerts, real-time scenario planning

This isn’t “someday” stuff. It’s happening *right now*—and it’s working.

So… What’s the Catch?

With great power comes—yep, you guessed it—responsibility.

GenAI is powerful, but it needs strong governance. Ethics, bias monitoring, data privacy, transparency… these aren’t optional. They’re essential for building trust and staying compliant.

That’s why leading orgs are investing in AI Centers of Excellence, building responsible AI frameworks, and upskilling their teams to lead the charge.

Our Take? You Don’t Have to Be First—But You Can’t Be Last

GenAI isn’t a passing trend. It’s a tectonic shift in how work gets done.

Organizations that start exploring now will shape the future. The ones that wait? They’ll be catching up in a world that’s already moved on.

Whether you’re just curious or ready to scale, now’s the time to start asking the big questions about how GenAI can support your business.

Because the real question isn’t *if* you’ll use GenAI… it’s *how soon* you’ll make it work for you.

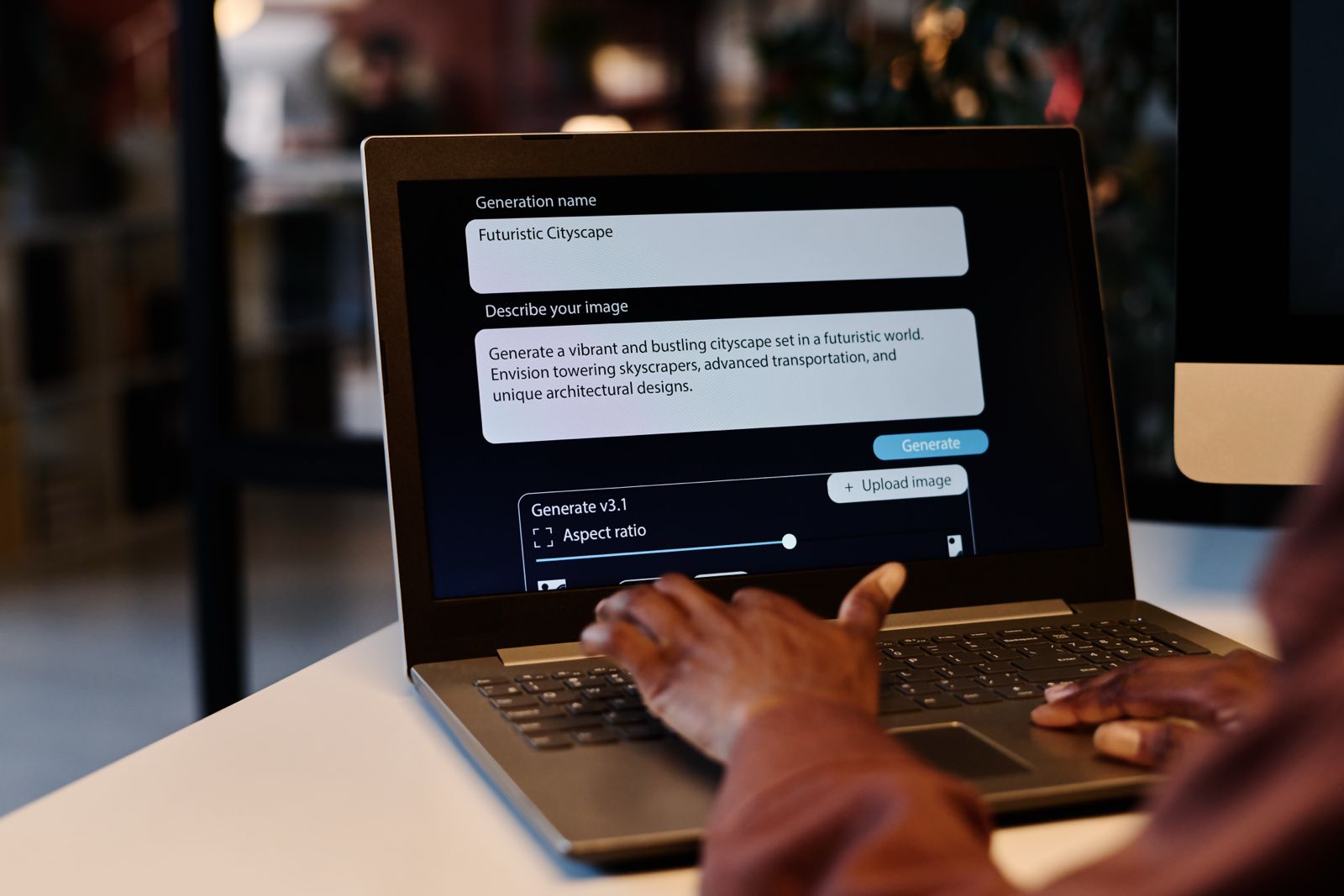

How to Make Gen AI Work for You

By – Srinija Potnuru

Hey!

Srinija here—I’m part of the team at Tek Analytics. Lately, I’ve been diving deep into Generative AI—reading up, watching demos, and, honestly, the coolest part? Seeing it come to life in the work we’re doing with our clients. Between helping businesses get started and watching my team work their magic, I’ve learned a lot.

It’s everywhere right now, right? Feels like everyone’s talking about how AI is going to change business forever. And from what I’ve seen firsthand, it’s not just talk: AI is changing the game.

But… (and this is a big but), getting AI to work in your business? That part’s not always so simple.

I’ve had many conversations where people tell me:

“Srinija, we know we need AI, but we don’t know where to start.”

Or, “We tried a small AI project, and it kind of worked… but now what?”

And, of course, “How do we make sure we don’t screw up the security or break some compliance rule?”

If any of this sounds familiar—you are not alone. I see it all the time.

What I’ve Noticed – The Real Struggles with Generative AI

From everything I’ve read, watched, and experienced through my work, I’ve noticed that most businesses run into the same roadblocks when it comes to AI.

The first is just figuring out what to do with it.

AI is this big, exciting thing, and everyone’s talking about it. But when it’s time to apply it to your business, you can get stuck. It’s like, “Okay, cool… but what problem is AI actually going to solve for us?” I’ve seen companies go all-in on AI tools without a plan, and they end up frustrated because it didn’t really make a difference.

Then, there’s the part where you try AI in one area, and it works… kind of.

Maybe you automate a task here or streamline a process there. It’s great—but it’s just that one thing. I’ve seen businesses get stuck in this “pilot phase” where AI is only helping in one corner of the company. They want to scale it up, but connecting AI to all their systems—especially the older ones—feels like trying to mix oil and water.

And honestly? Data and security worries are huge.

This one comes up in every single meeting. “How do we protect our data? What if we accidentally share something sensitive? Are we even allowed to do this under our industry rules?” I’ve seen businesses hesitate to move forward with AI just because they’re nervous about getting it wrong—and I don’t blame them.

How We Make AI Actually Work – What We Do at Tek Analytics

This is exactly why, at Tek Analytics, we’ve built a way to help businesses get AI working—without the confusion or guesswork.

We don’t just throw some AI tools at you and say, “Good luck!” We collaborate with you, side by side.

The first thing we always do is sit down and talk—really talk. We call it our AI Innovation Clinic, but honestly, it’s more like a brainstorming session. We dig into what’s working in your business, what’s not, and where AI can help. I’ve had so many of those “Oh, we didn’t even think of that!” moments in these chats.

Once we know what’s right for you, we help you get started—properly.

I’ve seen businesses rush into AI, skip the basics, and hit roadblocks later. That’s why we created AI Jump Start—to set up secure, compliant AI solutions that work with your team and systems, from day one.

And when you’re ready to scale? We’ve got you.

With AI Elevate, we help you move from a few small wins to AI powering your business. We will automate more, fine-tune your models, and make sure AI is driving real, long-term value—without the headaches.

Oh, and this is the part we’re really excited about—we’ve just launched our Rapid Deployment Solutions!

Sometimes you need AI up and running fast. We get that. These solutions are designed to get you started quickly, so you can see results in weeks—not months—without cutting corners on security or quality. We have already seen how much of a game-changer this can be, and I can’t wait to see what it does for more businesses like yours.

Why I Think We’re Different

One thing I’ve noticed that really sets us apart at Tek Analytics is that we don’t do this cookie-cutter, one-size-fits-all AI stuff.

Every business is different, and we get that. We make sure whatever AI solution we’re helping you with fits into your business, with your people, and your systems.

Plus—this is a big one—we take governance and security seriously. We’re not just excited about AI; we’re also the people making sure you’re staying compliant and not putting your data at risk.

I’ve seen businesses underestimate this part, and it can cause major headaches later. We make sure you don’t have to worry about that.

Let’s Chat?

So yeah, AI can be overwhelming—I get it. But it doesn’t have to be.

With the right approach (and the right partner), it can be pretty smooth—and, honestly, kind of exciting.

If you’re curious, or even if you’re just feeling stuck and want to bounce around some ideas, let’s talk. I’m always happy to share what I’ve seen work.

And if you want to check out more about how we help businesses with Generative AI, here’s the link:

Tek Analytics Generative AI Services

Solving Legacy BI Headaches for a Global Distributor

By – Merri Beckfield

From my vantage point, I have the privilege of observing organizations at varying points in their journey to data insights. And for SAP customers who have been waiting for the right moment for SAP Datasphere, there is now a focus on starting the journey.

Today, I’d like to share a bit about the journey of a supply chain management and logistics solutions provider who went all in on modernizing their SAP BI solutions.

THE PROBLEM

When we first started together, they were an SAP ECC shop with an on-premises, legacy BW data warehouse solution with over 500 BEx queries. Users were sourcing data from a variety of systems for reporting and decision making. On the front end, SAP BusinessObjects and Tableau were in the mix. This is certainly a very common scenario.

With their legacy BI solution, the organization was facing some common challenges. They were struggling to achieve the necessary speed and cost-effective storage needed for their operations. Additionally, the lack of integration resulted in the loss of SAP context for data stored in third-party databases, further complicating their data management efforts.

THE SOLUTION

To overcome the challenges, together we came up with a strategy and plan to seize the moment presented by an upcoming S/4 implementation. Rather than rework the legacy BI solutions to fit S/4 into the picture, the team took the opportunity to replace the existing BI solution with an all-in move to SAP Datasphere. To mitigate risk, a “joint success plan” was put into place, laying out a phased migration approach that began with a pilot. This allowed the team to work in an agile fashion, apply learnings as they went, and reap some business benefits along the way.

This all involved fully implementing a 3-tier Datasphere landscape, along with BW bridge. To be honest, we learned a few things about BW bridge (and its limitations) along the way! The data and data flows were fully migrated from BW to Datasphere in a manner that allowed us to take advantage of the work previously done in BW (why reinvent the wheel?). Security was fully integrated into the new solution. The queries for the front end were updated to the new solution. And a data lake solution was put into place for cost-effective data storage.

THE RESULTS

At the end of the day, the outcome was a solution that provided:

- Enhanced agility and scalability vs. their prior solution.

- Streamlined data access by integrating SAP and non-SAP data sources.

- Real-time integration with S/4HANA for faster time to insight.

Ready to move your data strategy forward and unlock the potential of SAP Datasphere? This client success story is just one example of how we help businesses modernize their data architectures, streamline operations, and drive faster decision-making.

Let us help you achieve the same success! Contact us today to discuss how we can support your migration to SAP Datasphere. Contact TEK – Tek Digital Transformations